Random Forest is a powerful machine learning algorithm used in a wide range of settings. It is a good choice for many tasks because it is relatively resistant to overfitting and can handle noisy, highly variable data. It is also easy to apply with off-the-shelf libraries, and it can be combined with other machine learning algorithms to improve results.

What is a random forest?

Before deciding whether this algorithm is the right choice for you, it helps to understand what it actually is and how it works.

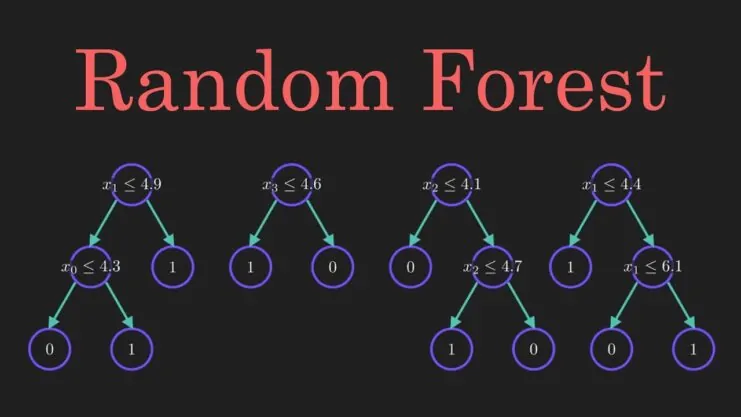

Random forests are a machine learning algorithm used for both classification and regression tasks. The algorithm builds a set of decision trees, each trained on a random sample of the training data, and then combines the predictions of the individual trees into a final prediction. A single decision tree tends to overfit and can be unstable: small changes in the training data can produce a very different tree. Combining many such trees usually yields a much more accurate and stable model.
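Why can many mediocre trees beat one? Consider an idealized calculation. Suppose each tree is correct with probability p and, unrealistically, every tree makes its mistakes independently of the others. The chance that a majority vote of n trees is correct is then the upper tail of a binomial distribution. The sketch below computes that tail; the function name is our own. Real trees are correlated with one another, which is exactly why random forests randomize what each tree sees.

```python
from math import comb


def majority_vote_accuracy(n_trees: int, p: float) -> float:
    """Probability that a strict majority of n_trees independent voters is
    correct, when each voter is correct with probability p.
    Use an odd n_trees to avoid ties."""
    need = n_trees // 2 + 1  # smallest strict majority
    return sum(
        comb(n_trees, k) * p**k * (1 - p) ** (n_trees - k)
        for k in range(need, n_trees + 1)
    )


for n in (1, 11, 101):
    print(n, round(majority_vote_accuracy(n, 0.6), 3))
```

With p = 0.6, one tree is right 60% of the time, but a vote of 101 independent trees is right well over 95% of the time. If p is below 0.5, voting makes things worse. The individual trees still need to beat chance.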

Moreover, random forests are relatively robust to overfitting, because averaging many trees smooths out the quirks each tree picks up from its own training sample. (Like any model, however, a random forest still needs training data that is representative of the data it will make predictions on.) For these reasons, random forests have become one of the most popular machine learning algorithms in recent years.

When should you use random forest?

Random forest is a powerful tool for predictive modeling, but it is not the best choice for every problem.

In general, it is well suited for problems with a large number of features, or where the relationship between features and the target variable is non-linear. However, there are some situations where a random forest is not the best choice.

For instance, if the dataset is very small, or if the relationship between the features and the target variable is simple and linear, then simpler algorithms such as linear models may be more accurate. Random Forest can also be less effective when the target variable is highly imbalanced, because majority voting tends to favor the dominant class. In regression problems, extreme outliers in the target variable can skew the averaged predictions.

As with any machine learning algorithm, it is important to evaluate several different models on your dataset before choosing the best one.
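One common way to compare candidate models on your own data is k-fold cross-validation:

- Split the rows into k folds.
- Train on k - 1 folds and measure accuracy on the held-out fold.
- Repeat for every fold and average the results.

The standard-library sketch below assumes each model is given as a hypothetical `fit(X, y)` function that returns a `predict(x)` function. The two toy models stand in for real candidates, such as a random forest and a simpler baseline. Libraries such as scikit-learn offer this ready-made via `cross_val_score`.

```python
import random
from collections import Counter


def k_fold_indices(n, k, seed=0):
    """Shuffle row indices 0..n-1 and deal them into k roughly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def cross_val_accuracy(fit, X, y, k=5, seed=0):
    """Average held-out accuracy of a model over k folds.
    `fit(X_train, y_train)` must return a `predict(x)` function."""
    scores = []
    for fold in k_fold_indices(len(X), k, seed):
        held_out = set(fold)
        train = [i for i in range(len(X)) if i not in held_out]
        predict = fit([X[i] for i in train], [y[i] for i in train])
        correct = sum(predict(X[i]) == y[i] for i in fold)
        scores.append(correct / len(fold))
    return sum(scores) / len(scores)


# Hypothetical candidate 1: always predict the most common training label.
def fit_majority(X, y):
    label = Counter(y).most_common(1)[0][0]
    return lambda x: label


# Hypothetical candidate 2: predict the class whose mean feature vector is closest.
def fit_nearest_centroid(X, y):
    centroids = {}
    for label in set(y):
        rows = [x for x, t in zip(X, y) if t == label]
        centroids[label] = [sum(col) / len(rows) for col in zip(*rows)]

    def predict(x):
        return min(centroids,
                   key=lambda c: sum((a - b) ** 2 for a, b in zip(x, centroids[c])))

    return predict


# Toy data: the label is 1 when the first feature exceeds 0.5.
rng = random.Random(42)
X = [[rng.random(), rng.random()] for _ in range(200)]
y = [int(x[0] > 0.5) for x in X]

for name, fit in [("majority", fit_majority), ("nearest centroid", fit_nearest_centroid)]:
    print(name, round(cross_val_accuracy(fit, X, y), 3))
```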

Why is a Random Forest a good choice?

There are several reasons why Random Forest is a popular choice.

First, it is often very accurate. On many tabular classification and regression problems it is among the strongest off-the-shelf methods, although no single algorithm is the best on every dataset.

Second, it is efficient. Because the trees are built independently of one another, training can be parallelized, and the algorithm scales well to large and high-dimensional data sets.

Third, it is relatively resistant to overfitting, particularly when you have plenty of data.

Finally, it is easy to use. The default settings often work reasonably well, so you can get a solid baseline just by supplying the data. You can then tune a few parameters to improve results, such as the number of trees, the maximum tree depth, and the number of features considered at each split.

It suits many different tasks. It is often used for classification, such as identifying whether an email is spam, and for regression, such as predicting the price of a stock. It can also support feature selection: a trained forest provides importance scores that indicate which features contribute most to its predictions.
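Random forest libraries usually report these importance scores for you. scikit-learn's `RandomForestClassifier`, for example, exposes impurity-based `feature_importances_`, and `sklearn.inspection` provides a `permutation_importance` function.

The sketch below hand-rolls permutation importance, one common model-agnostic approach: shuffle one feature column at a time and measure how much accuracy drops. The `model` function is a hypothetical stand-in for a trained forest's predict function. In real use you would compute importances on held-out data.

```python
import random


def accuracy(predict, X, y):
    """Fraction of rows where predict(x) matches the true label."""
    return sum(predict(x) == t for x, t in zip(X, y)) / len(y)


def permutation_importance(predict, X, y, seed=0):
    """Accuracy drop when each feature column is shuffled (higher = more important)."""
    rng = random.Random(seed)
    baseline = accuracy(predict, X, y)
    importances = []
    for j in range(len(X[0])):
        column = [row[j] for row in X]
        rng.shuffle(column)  # break the link between feature j and the label
        X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
        importances.append(baseline - accuracy(predict, X_perm, y))
    return importances


# Toy setup: the label depends only on feature 0.
rng = random.Random(1)
X = [[rng.random(), rng.random()] for _ in range(500)]
y = [int(x[0] > 0.5) for x in X]


def model(x):
    # Hypothetical "trained model" that happens to use only feature 0.
    return int(x[0] > 0.5)


print(permutation_importance(model, X, y))
```

The toy model ignores feature 1, so shuffling that column leaves accuracy unchanged and its importance is exactly 0. Feature 0 gets a large positive score. Features with importance near zero are candidates for removal.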

If you are still not sure, we believe that Serokell will reassure you.

How does the Random Forest algorithm work?

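A random forest builds a large number of decision trees and combines their results to make a final prediction. Each tree is trained on a bootstrap sample of the training data, meaning rows drawn at random with replacement. At each split, the tree considers only a random subset of the features. These two sources of randomness make the trees differ from one another, so their individual errors partly cancel out when they are combined.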

For regression, the algorithm averages the predictions of all the trees. For classification, each tree votes for a class, and the new data point is assigned the class with the most votes. Because each tree is trained on a different random subset of the data, the combined model is more robust and generalizes better to new data than any single tree.
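The procedure above can be sketched from scratch in standard-library Python. This is a minimal, unoptimized illustration for classification, not a production implementation:

- Each tree is grown on a bootstrap sample.
- Each split examines only `max_features` randomly chosen features and picks the threshold with the lowest Gini impurity.
- The forest predicts by majority vote.

The function names and default parameter values are our own choices.

```python
import random
from collections import Counter


def gini(labels):
    """Gini impurity of a list of class labels (0.0 means the node is pure)."""
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def majority(labels):
    """Most common label (ties go to the label seen first)."""
    return Counter(labels).most_common(1)[0][0]


def build_tree(X, y, max_features, max_depth, rng, depth=0):
    """Grow a CART-style classification tree.

    Internal nodes are (feature, threshold, left, right) tuples and leaves are
    class labels, so labels must not themselves be tuples.
    """
    if depth == max_depth or len(set(y)) == 1:
        return majority(y)
    best = None
    # Only a random subset of features is considered at each split.
    for f in rng.sample(range(len(X[0])), max_features):
        for threshold in sorted({row[f] for row in X}):
            left = [i for i, row in enumerate(X) if row[f] <= threshold]
            right = [i for i, row in enumerate(X) if row[f] > threshold]
            if not left or not right:
                continue
            # Size-weighted Gini impurity of the two children; lower is better.
            score = (len(left) * gini([y[i] for i in left])
                     + len(right) * gini([y[i] for i in right])) / len(y)
            if best is None or score < best[0]:
                best = (score, f, threshold, left, right)
    if best is None:  # no threshold separates the rows
        return majority(y)
    _, f, threshold, left, right = best

    def grow(idx):
        return build_tree([X[i] for i in idx], [y[i] for i in idx],
                          max_features, max_depth, rng, depth + 1)

    return (f, threshold, grow(left), grow(right))


def predict_tree(node, x):
    """Follow the splits from the root down to a leaf label."""
    while isinstance(node, tuple):
        f, threshold, left, right = node
        node = left if x[f] <= threshold else right
    return node


def fit_forest(X, y, n_trees=25, max_features=1, max_depth=5, seed=0):
    """Train n_trees trees, each on a bootstrap sample of the rows.
    max_features=1 suits this two-feature toy; a common default for
    classification is about sqrt(number of features)."""
    rng = random.Random(seed)
    forest = []
    for _ in range(n_trees):
        # Bootstrap: draw len(X) row indices with replacement.
        idx = [rng.randrange(len(X)) for _ in range(len(X))]
        forest.append(build_tree([X[i] for i in idx], [y[i] for i in idx],
                                 max_features, max_depth, rng))
    return forest


def predict_forest(forest, x):
    """Classify x by majority vote over all trees."""
    return majority([predict_tree(tree, x) for tree in forest])


# Toy data: two features in [0, 1]; the class is 1 above the diagonal x0 + x1 = 1.
data_rng = random.Random(0)


def make_data(n):
    X = [[round(data_rng.random(), 2), round(data_rng.random(), 2)] for _ in range(n)]
    return X, [int(a + b > 1) for a, b in X]


X_train, y_train = make_data(150)
X_test, y_test = make_data(200)
forest = fit_forest(X_train, y_train, n_trees=15)
acc = sum(predict_forest(forest, x) == t for x, t in zip(X_test, y_test)) / len(y_test)
print(f"held-out accuracy: {acc:.2f}")
```

A regression version would change three things:

- Leaves would store the mean target value.
- Splits would minimize variance instead of Gini impurity.
- The forest would return the average of the trees' outputs (for example with `statistics.mean`) instead of a vote.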

Is it easy to use?

Yes. It is one of the easiest machine learning algorithms to use. It works for both classification and regression tasks, and typically only a few parameters need tuning.

In practice, training a random forest comes down to four steps:

Step One

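First, select a data set to train your model on. Random forests work with tabular data, commonly stored as a CSV file. Each row is an example, and the columns hold the features (the input variables) and the target variable you want to predict. You need at least one feature column in addition to the target.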
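If your data is in a CSV file, Python's built-in `csv` module is enough to split it into feature rows and a target column. This sketch assumes a header row and numeric feature columns. The column names and values are made up for illustration. For real projects, pandas' `read_csv` is the more common choice.

```python
import csv
import io


def load_xy(file, target_column):
    """Read a CSV with a header row and return (X, y, feature_names).
    Assumes every column except target_column holds numbers."""
    reader = csv.DictReader(file)
    if reader.fieldnames is None or target_column not in reader.fieldnames:
        raise ValueError(f"missing target column {target_column!r}")
    feature_names = [name for name in reader.fieldnames if name != target_column]
    X, y = [], []
    for row in reader:
        X.append([float(row[name]) for name in feature_names])
        y.append(row[target_column])
    return X, y, feature_names


# Hypothetical sample data; with a real file you would use, e.g.,
# with open("your_data.csv", newline="") as f: X, y, names = load_xy(f, "price_band")
sample = io.StringIO(
    "sqft,rooms,price_band\n"
    "850,2,low\n"
    "2100,4,high\n"
    "1200,3,low\n"
)
X, y, names = load_xy(sample, target_column="price_band")
print(names, X, y)
```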

Step Two

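Next, choose the type of Random Forest algorithm you want to use. The main choice is between a classifier, for predicting categories, and a regressor, for predicting numbers. scikit-learn, for example, provides RandomForestClassifier and RandomForestRegressor. Libraries also offer related variants and options, so be sure to read the documentation carefully.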

Step Three

Then, decide how many trees to create. More trees generally make the model more accurate and more stable, but the improvement levels off after a certain point. Training and prediction time, meanwhile, keep growing roughly in proportion to the number of trees.

Step Four

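Finally, fit the model on your training data and use it to make predictions on new data. To get an honest estimate of its accuracy, evaluate it on data that was not used for training.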

How many trees can we use in a Random Forest?

The right number of trees depends on the size and complexity of your data set. Large, complex data sets usually benefit from more trees, while small, simple ones can get away with fewer. Adding trees does not cause overfitting, but each extra tree brings a smaller gain while the computational cost keeps growing. In general, it is best to start with a small number of trees and increase it until the model's accuracy on validation data stops improving.
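Why do the gains from extra trees level off? A standard result for averaged (regression) predictions helps. Suppose each tree's prediction has variance σ² and any two trees' predictions have correlation ρ. Then the average of B trees has variance ρσ² + (1 - ρ)σ²/B. The second term shrinks as you add trees, but the first does not. The sketch below simply evaluates this formula. The values σ² = 1.0 and ρ = 0.3 are hypothetical, not measurements from a real forest.

```python
def forest_variance(n_trees: int, sigma2: float, rho: float) -> float:
    """Variance of the average of n_trees identically distributed predictions,
    each with variance sigma2 and pairwise correlation rho:
        rho * sigma2 + (1 - rho) * sigma2 / n_trees
    """
    return rho * sigma2 + (1 - rho) * sigma2 / n_trees


# Hypothetical values: single-tree variance 1.0, correlation 0.3 between trees.
for b in (1, 10, 50, 100, 500):
    print(b, round(forest_variance(b, sigma2=1.0, rho=0.3), 4))
```

With these values, the variance drops from 1.0 for a single tree to 0.37 with 10 trees. Even 500 trees only bring it to about 0.301, close to the floor of ρσ² = 0.3. This is why, past a certain point, more trees mostly cost time.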

Conclusion

Random Forest is a powerful machine learning algorithm that can be used for both classification and regression tasks. It is easy to use, and we believe it can be very helpful to you. However, its results depend on choosing the right data set and parameters for your task, so choose them carefully. We hope this article has helped you with that decision.